Lightweight tooling for creating custom Raspberry Pi software deployments is surprisingly sparse. In this post we go over why such tooling is useful and how to build it.

Part 1: Identifying automation timing and strategy

A relatively common strategy for replicating Raspberry Pi devices is to simply copy the disk image onto a second SD card and generate new keys. Indeed, we did that here at Boulder Engineering Studio (BES) as we got into a type of work that involves numerous Raspberry Pi devices.

This quickly becomes inefficient for three reasons:

- The functionality cobbled into the system is undocumented and difficult to discover, so no one person knows about all the modifications and new people have a hard time getting up to speed

- Any new functionality or fixes added in more recent projects must be ported to older devices by hand, which is laborious and error-prone

- Because the disk image itself is cloned, insidious disk corruption may be cloned with it and amplified over time

Facing yet another iteration of this process, with every indication that it would remain part of our business, I decided it was time to automate. It was clear that we needed a way to build our tools and settings directly into a fresh disk image. pi-gen, the tool that generates the official Raspbian disk images we started with, seemed like the obvious choice. But I thought: wouldn't it be nice to be able to run commands as if on the Pi, without having to connect a keyboard and monitor? That would mean extracting the Pi's root filesystem, chrooting into it, and running its binaries under an emulator, which seemed tricky to implement cleanly.

I had just finished updating some internal processes with Docker, and a connection occurred to me. A Docker image is an environment built from the commands in a single file. If a Raspberry Pi disk image could be built the same way, that file would describe every addition to the disk image to anyone who read it, and so double as documentation. This seemed like such a good solution that I started researching its viability immediately.

How would one go about this? The Pi uses the ARM architecture, and the binaries on the Pi filesystem cannot run on common desktop computers without emulation. Could I really run ARM binaries from a Docker image? Would I have to cross-build the Docker image I wanted? Too many questions - time to experiment.

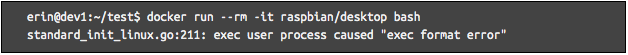

I found a Raspbian Docker image on Docker Hub, so I started my research by simply running that.

No good so far. The ARM binaries in that image can't be executed directly by the x64 processor in my computer. Searching online further, I found some hints that this limitation could be overcome. I installed a package and quickly found that the ARM binaries could be executed in Docker after all.

It turns out the package uses the Linux kernel's binfmt_misc feature to run the process transparently under an appropriate emulator (see https://github.com/multiarch/qemu-user-static). On Ubuntu 20, the package 'qemu-user-static' sets this up automatically. With binfmt_misc, when someone tries to start a process from a foreign binary, the kernel hands that binary to another program, in this case an emulator. QEMU is even able to reuse the x64 kernel on the host system to handle syscalls from the ARM binary, without loading an ARM kernel. Suddenly this pursuit seemed not just possible but, with the ability to run ARM binaries during a Docker build as if they were native, actually more elegant than expected.
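To check whether such a handler is registered on a given machine, something like the following sketch can help. It assumes the handler is named `qemu-arm`, which is the name the Debian and Ubuntu `qemu-user-static` package uses for 32-bit ARM; the helper name `binfmt_enabled` and its optional second argument are made up for this example.

```shell
# Sketch: succeed if binfmt_misc has an enabled handler with the given name.
# $1 is the handler name (assumed here to be "qemu-arm" for 32-bit ARM).
# $2 is optional and overrides the binfmt_misc directory; it defaults to the
# usual mount point and exists only so the function can be tried elsewhere.
binfmt_enabled() {
    entry="${2:-/proc/sys/fs/binfmt_misc}/$1"
    # The first line of a handler entry reads "enabled" or "disabled".
    [ -r "$entry" ] && head -n 1 "$entry" | grep -qx 'enabled'
}
```

On Ubuntu this could be used as `sudo apt-get install qemu-user-static` followed by `binfmt_enabled qemu-arm && echo "ARM binaries will run through QEMU"`.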

Part 2: A step by step guide on disk image creation with Docker

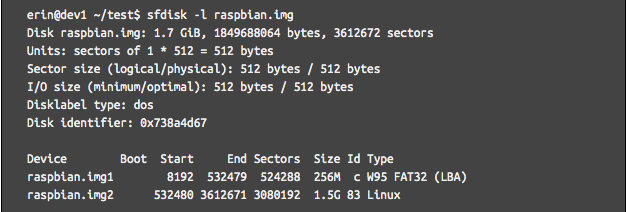

Let's go through the process of creating a disk image as a proof of concept. First, download a Raspbian disk image from https://www.raspberrypi.org/downloads/raspbian/ to use as the base. In this example it has been renamed to “raspbian.img”.

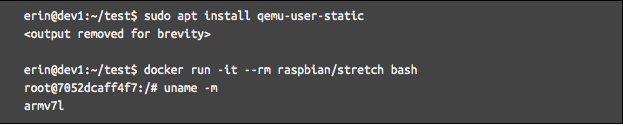

The disk image contains two partitions. The boot partition is partition 1, and the root partition is partition 2. We want to modify the root partition, so it needs to be copied out of the disk image. `losetup` creates a loop device from a disk image file, and the partitions on that device can then be mounted to access their files.

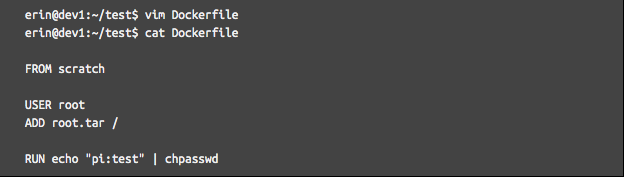
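In text form, the extraction step might look roughly like the sketch below. It is not a copy of the commands in the screenshot; the function name `extract_root` and the temporary mount point are assumptions made for this example, and it needs root privileges to run for real.

```shell
# Sketch: copy partition 2 (the root filesystem) of a disk image into a tarball.
# Error handling is mostly left out to keep the steps readable.
extract_root() {
    img="$1"
    out="$2"
    # -f picks a free loop device, -P scans the partition table so the
    # partitions show up as <loopdev>p1 and <loopdev>p2, and --show prints
    # the name of the loop device that was chosen.
    loopdev=$(sudo losetup -fP --show "$img") || return 1
    mnt=$(mktemp -d)
    sudo mount "${loopdev}p2" "$mnt"
    # Archive from inside the mount point so that paths in the tarball are
    # relative to the root of the Pi's filesystem.
    sudo tar -C "$mnt" -cf "$out" .
    sudo umount "$mnt"
    sudo losetup -d "$loopdev"
    rmdir "$mnt"
}
```

Called as `extract_root raspbian.img root.tar`, this would leave the Pi's root filesystem in root.tar.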

The root filesystem is now contained in the archive root.tar. This is where Docker comes in. Let's create a Dockerfile that uses this entire filesystem and also changes something, as a way of testing whether the approach works. In this example, it changes the default user’s password to ‘test’.

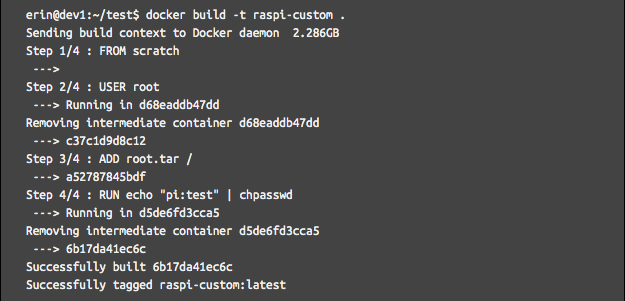

The `FROM scratch` statement tells Docker to start with an empty image. `ADD root.tar /` will extract the tarball into the image's filesystem at /. Let’s try building it.
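A Dockerfile along these lines could be written out as in the sketch below. The `FROM` and `ADD` lines follow the description above; the `RUN` line is an assumption, since it presumes the default user is named `pi` and uses `chpasswd` as one common way to set a password. The helper name `write_dockerfile` is hypothetical.

```shell
# Sketch: write a Dockerfile like the one described above into directory $1.
write_dockerfile() {
    cat > "$1/Dockerfile" <<'EOF'
FROM scratch
ADD root.tar /
# Assumption: the default user is "pi". chpasswd reads "user:password" pairs
# on stdin, and it runs as an ARM binary through the emulator during the build.
RUN echo 'pi:test' | chpasswd
EOF
}
```

With root.tar in the current directory, `write_dockerfile . && docker build -t raspi-custom .` would then build the image.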

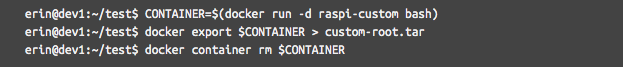

It’s still a little hard to believe it runs an ARM executable so seamlessly! Now the modified filesystem is hanging out in the Docker image tagged raspi-custom. Next, this filesystem needs to be exported back out into a tar file. This is done with the `docker export` command, which operates on a container created from the image rather than on the image itself.

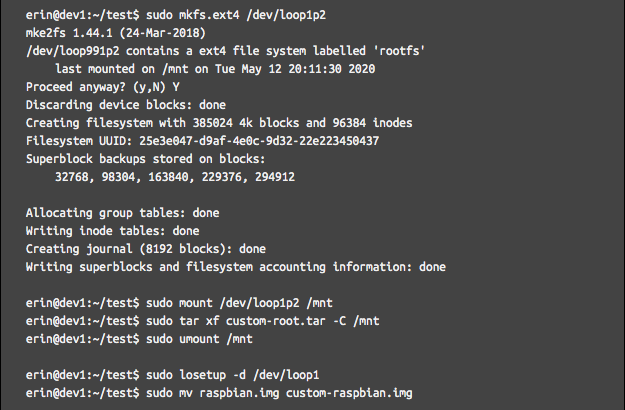
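As a rough text sketch of the export step: `docker export` takes a container, so a throwaway container is created from the image first and removed afterwards. The function name `export_image_root` is made up for this example, and `/bin/true` is only a placeholder command.

```shell
# Sketch: export the filesystem of a Docker image to a tarball by way of a
# temporary container.
export_image_root() {
    image="$1"
    out="$2"
    # An image built FROM scratch has no default command, so one is named
    # here just to satisfy `docker create`. The container is never started.
    cid=$(docker create "$image" /bin/true) || return 1
    docker export -o "$out" "$cid"
    status=$?
    docker rm "$cid" > /dev/null
    return "$status"
}
```

For the image built earlier this would be `export_image_root raspi-custom custom-root.tar`.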

Now the custom root is contained in custom-root.tar. We need to overwrite the original root filesystem with the modified one. The final step is to reformat the root partition in the disk image and copy the modified filesystem into its place.
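One way this step might look is sketched below. It assumes the root partition is ext4 and carries the label `rootfs`, as on stock Raspbian images as far as I know; the function name `replace_root` is made up for this example. It needs root privileges and overwrites partition 2 of the image, so try it on a copy.

```shell
# Sketch: replace the root filesystem in a disk image with a tarball's contents.
# Error handling is mostly left out to keep the steps readable.
replace_root() {
    img="$1"
    rootfs="$2"
    loopdev=$(sudo losetup -fP --show "$img") || return 1
    # Recreate an empty ext4 filesystem on partition 2.
    # Assumption: the label "rootfs" matches the original image.
    sudo mkfs.ext4 -q -F -L rootfs "${loopdev}p2"
    mnt=$(mktemp -d)
    sudo mount "${loopdev}p2" "$mnt"
    # -p keeps permissions; --numeric-owner keeps the Pi's user and group IDs
    # instead of mapping names through the host's /etc/passwd.
    sudo tar -C "$mnt" --numeric-owner -xpf "$rootfs"
    sudo umount "$mnt"
    sudo losetup -d "$loopdev"
    rmdir "$mnt"
}
```

Called as `replace_root raspbian.img custom-root.tar`, this would leave the customized filesystem in raspbian.img.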

It's finished! When this custom image boots for the first time, it will resize itself to the size of the disk as Raspbian normally does, and when you log in for the first time the password will be 'test', as per the change made in the Dockerfile.

To create the automation, this technique just needed to be translated into a script that steps through it automatically. The script I made for this can be found at https://github.com/eringr/pidock for your convenience. Happy pi-ing!
